Events

This two-hour workshop, delivered by Screen Yorkshire, aims to prepare you for the moment when things go wrong, as they tend to do on a regular basis on even the most established production. You’ll be presented with common problems that productions e

Embodying Histories is a series of online seminars exploring cross-disciplinary perspectives on embodied knowledge, research and practice in historical fields.

We are honoured to host Madeleine Modin, research archivist at Svenskt visarkiv - Centre for Swedish Folk Music and Jazz Research in Stockholm, for a deep dive into makers' choices of materials in traditional folk music instruments.

In this week's Contemporary Music Research Seminar, we are joined by Sylianos Dimou (composer).

Join us for this week's ACT Research Seminar.

Join us for three 30-minute performances created by the 2nd Year Theatre: Writing, Directing and Performance students.

Join us for this year's third-year theatre festival!

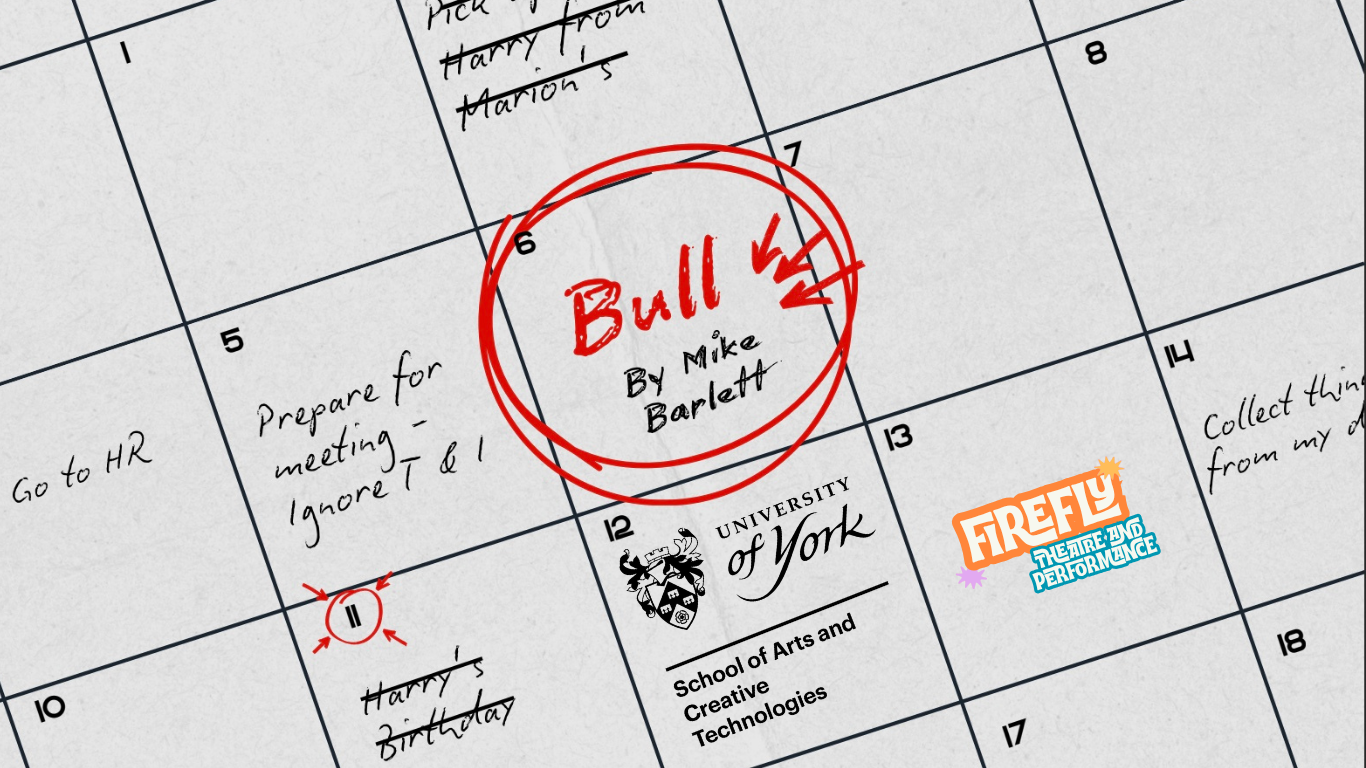

Toni, Thomas and Isobel all want to keep their jobs, but only two of them can. What begins as a professional discussion quickly becomes a calculated act of exclusion.

In a drug trial, participants Connie and Tristan fall in love. But are their feelings a consequence of the new antidepressant they are taking?

Shakers Restirred is a fast-paced, unique comedy - a cocktail of witty dialogue, topped off with hilarious caricatures familiar to your everyday life.

A desperate voicemail, a box of razor blades, and a packet of henna: Judith isn’t sure if she’s going to change her look or end it all. Oh, and she might be pregnant.

Imprisoned in an abandoned warehouse, a desperate group of failing actors is trapped in a dark experiment. After months of endlessly rehearsing George Bernard Shaw's Pygmalion with no director to guide them, some of the ensemble have disappeared...

Hands On is a playful piano performance all about touch: the important nuances of touch, especially for the wonderful resonances of piano music, but also for how playing and listening to music touches us.

YorkConcerts

Join Tangram on a deep exploration of Chinese philosophy and Western classical music in a programme for Chinese and Western instruments that showcases how ancient divination practices have inspired music, ritual and performance for over 1000 years.

Our annual student showcase celebrates the breadth of musical talent from across the University. Join our exceptionally talented student performers as they take you on an exhilarating journey through the history of vocal music!

Experience the booming sounds of bronze gongs as Gamelan Sekar Petak offers a spirited celebration of traditional Javanese songs and contemporary compositions by Indonesian and York-based composers.

Julius Eastman's 1974 piece Femenine unfolds from a simple oscillation between two notes into a vast and varied sonic canvas shaped by its performers. It sits at the heart of this programme from The Chimera Ensemble, alongside new works by students.

Join us in celebrating the rich and diverse community of postgraduate performers here at York, showcasing a selection of their outstanding work from across the year.

University Choir and Symphony Orchestra join forces in the stunning setting of York Minster to provide a spectacular finale to our 2025/26 season, featuring works written at the very end of the lives of some of the greatest composers in history!