The morphoacoustics of human hearing

Practically all external sounds reach the human auditory system via the left and right ear canals. Despite having only two channels of auditory information, we can typically determine the direction of a sound source to within a few degrees. Acoustically, this impressive performance can largely be accounted for by three auditory spatial cues: the inter-aural time difference, the inter-aural level difference and the spectral cues imposed by the pinna (outer ear).
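To give a sense of scale for the inter-aural time difference, a minimal sketch follows using Woodworth's rigid spherical-head approximation, ITD = (a/c)(θ + sin θ). This is a textbook model swapped in for illustration, not this group's method. The head radius of 0.0875 m and speed of sound of 343 m/s are assumed typical values, and the model only covers a far-field source on the horizontal plane.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, air at roughly 20 °C (assumed)
HEAD_RADIUS = 0.0875    # m, a commonly used average head radius (assumed)


def woodworth_itd(azimuth_deg: float,
                  radius: float = HEAD_RADIUS,
                  c: float = SPEED_OF_SOUND) -> float:
    """Inter-aural time difference in seconds for a far-field source.

    Uses Woodworth's spherical-head formula ITD = (a / c) * (theta + sin theta),
    valid for azimuths from 0 degrees (straight ahead) to 90 degrees (to one side).
    """
    if not 0.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth must lie in [0, 90] degrees")
    theta = math.radians(azimuth_deg)
    return (radius / c) * (theta + math.sin(theta))


# Print the predicted ITD, in microseconds, for a few source azimuths.
for az in (0, 5, 30, 90):
    print(f"{az:3d} deg -> {woodworth_itd(az) * 1e6:6.1f} us")
```

With these values the largest ITD, for a source directly to one side, comes out at about 0.66 ms. Real heads are not spheres, so measured ITDs differ from person to person.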

The hearing system combines these cues to give us a sense of the sound’s direction. The cues are embedded in a family of acoustic filters known as head-related transfer functions (HRTFs), with one left/right pair for each source direction. When the pressure variations from a sound source are input to a left HRTF, the output approximates the pressure variations at the left eardrum; likewise, a right HRTF produces the corresponding pressure variations at the right eardrum.

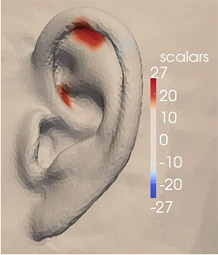
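The filtering described above is usually applied in the time domain by convolving the source signal with head-related impulse responses (HRIRs), the inverse Fourier transforms of the HRTFs. The sketch below uses Python with NumPy. The function name `render_binaural`, the 48 kHz sample rate and the two HRIRs are hypothetical: the HRIRs are toy filters (a pure delay and gain standing in for the time and level differences), not measured or computed HRTF data.

```python
import numpy as np


def render_binaural(source: np.ndarray,
                    hrir_left: np.ndarray,
                    hrir_right: np.ndarray) -> np.ndarray:
    """Filter a mono source through a left/right HRIR pair.

    Returns an array of shape (2, n): row 0 approximates the left-eardrum
    signal and row 1 the right-eardrum signal.
    """
    left = np.convolve(source, hrir_left)    # full linear convolution
    right = np.convolve(source, hrir_right)
    return np.stack([left, right])


# Toy stand-ins for an HRIR pair (NOT real HRTF data): a source on the
# listener's left reaches the left ear first and louder, the right ear later and quieter.
fs = 48_000                         # sample rate in Hz (assumed)
itd_samples = round(0.00066 * fs)   # about 0.66 ms inter-aural delay (assumed)
hrir_left = np.zeros(64)
hrir_left[0] = 1.0
hrir_right = np.zeros(64)
hrir_right[itd_samples] = 0.5       # about 6 dB quieter at the far ear (assumed)

t = np.arange(fs // 10) / fs        # 100 ms of signal
source = np.sin(2 * np.pi * 500 * t)  # 500 Hz test tone
ears = render_binaural(source, hrir_left, hrir_right)
print(ears.shape)  # (2, 4863)
```

In practice the HRIR pair would be selected from a measured or calculated set for the desired source direction (for example, one stored in the AES69 SOFA format), and long signals would typically be filtered with FFT-based convolution for speed.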

HRTFs arise from the complex shape (or morphology) of the ear flaps (pinnae), the head and the upper torso, which together filter sound on its way to each eardrum. With sufficient knowledge of an individual’s morphology, it is possible to calculate that person’s unique set of HRTFs. This is currently a difficult and computationally intensive process, but it may ultimately prove easier than measuring HRTFs acoustically. Finding an efficient way to estimate individualised HRTFs is viewed by many as the key to the widespread exploitation of 3D personal audio. Our research is contributing to this goal in several ways.

Members

- Tony Tew

- Jingbo Gao

- Chris Pike

- Alistair Hinde

- Laurence Hobden

Research