Motion tracked binaural sound over headphones

Design of immersive surround systems requires an understanding of the perceptual cues for sound source localisation. A source at a given angle of incidence to the head creates subtle interaural time and level difference cues at the ears, and its sound is spectrally shaped by the pinnae. These cues are embedded in the Head Related Impulse Response (HRIR). For headphone reproduction of 3-D audio, filtering a source signal with the pair of HRIRs (one per ear) for the desired direction and presenting the filtered signals over headphones should ideally give the listener the impression that the source is located outside the head, in the direction dictated by the filters. This process, known as binaural synthesis, faces several challenges. First, HRIRs differ from listener to listener, and capturing large HRIR datasets is expensive and time consuming. As yet there is no assured method for selecting ‘near match’ HRIRs for an individual, and generic HRIR sets are known to produce localisation errors, including front-back reversals and a lack of externalisation. Second, binaural synthesis requires the inclusion of virtual room acoustics to aid externalisation and depth perception.
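A minimal Python/NumPy sketch of the binaural synthesis step: a mono source is convolved with a left/right HRIR pair to give the two headphone signals. No measured HRIRs are available here, so `toy_hrir_pair` is a hypothetical stand-in that encodes only an interaural delay and level difference. It lacks the pinna spectral shaping that a real HRIR carries, so it illustrates the filtering, not realistic localisation.

```python
import numpy as np

def binaural_synthesis(source, hrir_left, hrir_right):
    """Filter a mono source with a left/right HRIR pair (direct convolution)."""
    left = np.convolve(source, hrir_left)
    right = np.convolve(source, hrir_right)
    return np.stack([left, right])  # shape (2, len(source) + len(hrir) - 1)

def toy_hrir_pair(itd_samples, ild_db, length=32):
    """Hypothetical stand-in for a measured HRIR pair: a pure delay and level
    difference only (no pinna filtering). The source is assumed to be on the
    left, so the right-ear response is delayed and attenuated."""
    h_left = np.zeros(length)
    h_right = np.zeros(length)
    h_left[0] = 1.0
    h_right[itd_samples] = 10 ** (-ild_db / 20)
    return h_left, h_right

fs = 48000                             # sample rate, Hz
t = np.arange(fs // 10) / fs           # 100 ms of signal
source = np.sin(2 * np.pi * 500 * t)   # 500 Hz test tone
h_l, h_r = toy_hrir_pair(itd_samples=30, ild_db=6.0)  # 30 samples = 0.625 ms
binaural = binaural_synthesis(source, h_l, h_r)
```

In practice the HRIR pair would be selected from a measured set for the desired direction, and virtual room acoustics would be added on top to aid externalisation.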

Motion tracked binaural sound over loudspeakers

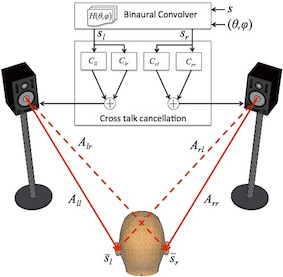

The presentation of binaural signals over loudspeakers creates additional challenges. First, the delivered signals are inherently coloured by room effects and by filtering from the listener’s own head and pinnae. Transfer function compensation is required to preserve the presented localisation cues, in particular elevation cues, which depend strongly on the frequency characteristics of the sound arriving at the listener’s inner ear. Second, acoustic crosstalk must be removed: each ear receives signals from all loudspeakers present. In a two-loudspeaker setup, crosstalk occurs when the left ear hears the signal from the right loudspeaker and vice versa. Signal processing is therefore required so that the signals arriving at the ears closely approximate the binaural signals that would be presented to the listener over headphones.
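For the two-loudspeaker case, the problem is commonly written, per frequency ω, as a 2×2 transfer-function model. The notation here is ours rather than SADIE’s: E_L and E_R are the ear signals, S_L and S_R the loudspeaker feeds, H_XY the response from loudspeaker Y to ear X (so H_LR and H_RL are the crosstalk paths), B the intended binaural signals and C a matrix of processing filters.

```latex
\begin{aligned}
\begin{bmatrix} E_L(\omega) \\ E_R(\omega) \end{bmatrix}
&=
\underbrace{\begin{bmatrix} H_{LL}(\omega) & H_{LR}(\omega) \\ H_{RL}(\omega) & H_{RR}(\omega) \end{bmatrix}}_{\mathbf{H}(\omega)}
\begin{bmatrix} S_L(\omega) \\ S_R(\omega) \end{bmatrix},
\qquad \mathbf{S}(\omega) = \mathbf{C}(\omega)\,\mathbf{B}(\omega), \\
\mathbf{E}(\omega) &= \mathbf{H}(\omega)\,\mathbf{C}(\omega)\,\mathbf{B}(\omega)
\approx e^{-j\omega\tau}\,\mathbf{B}(\omega)
\quad \text{when} \quad
\mathbf{C}(\omega) \approx e^{-j\omega\tau}\,\mathbf{H}(\omega)^{-1}.
\end{aligned}
```

Because H includes the room and the listener’s head, inverting it addresses both the crosstalk and the colouration; τ is a modelling delay that keeps the filters causal.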

This processing provides acoustic crosstalk cancellation and equalisation (ACTCE). If done successfully, the system effectively implements a set of virtual headphones and equalises the room acoustics at the listener position. However, listener head movements of more than 75 to 100 mm have been shown to completely destroy the desired spatial effect and can degrade the cancellation. This can be partially circumvented by adding head tracking and dynamically updating the system as the listener moves. Under head-tracked conditions, SADIE addresses improving the conditioning of the matrices involved as well as reducing their size.
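A minimal sketch of head-tracked crosstalk cancellation, assuming an idealised free-field plant: each loudspeaker is treated as a point source (1/r spreading plus delay) with no head shadowing or room, which is a strong simplification. The filters use a Tikhonov-regularised inverse, a standard choice that is swapped in here and not necessarily SADIE’s method. `plant_matrix`, `ctc_filters`, the geometry and `beta` are all hypothetical. The condition number of H shows how well posed the inversion is at each frequency.

```python
import numpy as np

C_SOUND = 343.0  # speed of sound in air, m/s

def plant_matrix(freqs, speaker_pos, ear_pos):
    """Free-field 2x2 plant H[f, ear, speaker] from loudspeakers to ears.

    Simplifying assumption: ideal point sources (1/r spreading plus
    propagation delay); head shadowing, pinnae and room are ignored."""
    # Distance from every ear (rows) to every loudspeaker (columns), in m
    r = np.linalg.norm(ear_pos[:, None, :] - speaker_pos[None, :, :], axis=-1)
    k = 2 * np.pi * np.asarray(freqs, dtype=float)[:, None, None] / C_SOUND
    return np.exp(-1j * k * r) / r

def ctc_filters(H, beta=1e-3):
    """Tikhonov-regularised inverse C = (H^H H + beta I)^-1 H^H, per bin."""
    Hh = np.conj(np.swapaxes(H, -1, -2))
    return np.linalg.solve(Hh @ H + beta * np.eye(H.shape[-1]), Hh)

# Hypothetical geometry: listener at the origin facing +y, loudspeakers 2 m ahead
speakers = np.array([[-0.5, 2.0], [0.5, 2.0]])    # left, right loudspeaker (x, y)
ears = np.array([[-0.0875, 0.0], [0.0875, 0.0]])  # left, right ear
freqs = np.array([2000.0])

H = plant_matrix(freqs, speakers, ears)
C = ctc_filters(H)
cond = np.linalg.cond(H)  # condition number of the plant in each bin

# Head moves 100 mm to the right
moved = ears + np.array([0.1, 0.0])
H_moved = plant_matrix(freqs, speakers, moved)
stale = H_moved @ C                       # filters not updated: crosstalk returns
tracked = H_moved @ ctc_filters(H_moved)  # filters recomputed for tracked position
```

In this toy model `H @ C` is close to the identity (crosstalk cancelled), `stale` has large off-diagonal terms after the 100 mm move, and recomputing the filters from the tracked position restores cancellation. `beta` limits filter gain in ill-conditioned bins, for example at low frequencies where the two paths to each ear are nearly identical, at the cost of less complete cancellation there.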

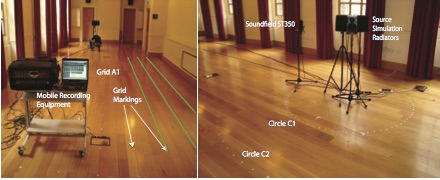

The equalisation process in ACTCE requires the listening room response to be known at the listener position. This calls for methods of measuring stable, unoccupied acoustic environments. However, the response measured at the sweet spot becomes invalid once the listener changes position. The challenge then becomes characterising a listening zone within the room and updating the responses as the tracked listener moves. Taking a large number of acoustic measurements on the boundary of the listening zone is impractical, so SADIE investigates methods for characterising the soundfield from sparse measurements around the listening zone.
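SADIE’s sparse-measurement method is not described here. Purely to illustrate the problem, the Python sketch below uses a swapped-in, deliberately simple technique: inverse-distance weighting of magnitude responses measured at a few points, followed by a regularised inverse that forms an equaliser at the tracked listener position. The function names, measurement positions, response values and `beta` are hypothetical.

```python
import numpy as np

def idw_interpolate(positions, responses, target, power=2.0, eps=1e-9):
    """Inverse-distance-weighted estimate of the response at `target`.

    positions: (M, 2) measurement points in m; responses: (M, F) magnitude
    responses; target: (2,) tracked listener position. If the target
    coincides with a measurement point, that measurement is returned."""
    d = np.linalg.norm(positions - target, axis=1)
    if np.any(d < eps):
        return responses[np.argmin(d)]
    w = 1.0 / d ** power
    return (w[:, None] * responses).sum(axis=0) / w.sum()

def eq_filter(magnitude, beta=1e-2):
    """Regularised inverse magnitude equaliser |H| / (|H|^2 + beta)."""
    return magnitude / (magnitude ** 2 + beta)

# Four hypothetical measurements at the corners of a 0.4 m x 0.4 m zone
positions = np.array([[-0.2, -0.2], [0.2, -0.2], [-0.2, 0.2], [0.2, 0.2]])
responses = np.array([   # magnitudes at three frequency bins, made-up values
    [1.0, 0.8, 1.2],
    [1.0, 0.6, 1.4],
    [1.0, 0.9, 1.1],
    [1.0, 0.7, 1.3],
])
centre = idw_interpolate(positions, responses, np.array([0.0, 0.0]))
eq = eq_filter(centre)  # equaliser for a listener tracked to the zone centre
```

Magnitude-only interpolation ignores phase and breaks down where responses change rapidly with position, such as at high frequencies, which is why characterising a soundfield from sparse measurements needs more careful methods.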